For my final, I want to combine musical performance with visuals, both produced algorithmically. I will generate a score for two guitars using an as-yet-undecided algorithm. The guitars will run through a digital effects processor (used for aesthetic purposes, not integral to the project itself), then through a pitch-to-MIDI converter, and then into Max, which will send the MIDI data on to Processing. Once the data reaches Processing, it will update a real-time graphic display.
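The pitch-to-MIDI stage in this chain maps a detected frequency onto the equal-tempered MIDI note scale. The sketch below assumes A4 = 440 Hz tuning and standard MIDI note numbering (A4 = note 69). It is not how fiddle~ or any particular converter works internally, and the function names are hypothetical.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

def freq_to_midi(freq_hz: float) -> float:
    """Convert a frequency in Hz to a fractional MIDI note number.

    Assumes A4 = 440 Hz = note 69; each semitone is a factor of 2**(1/12).
    """
    if freq_hz <= 0:
        raise ValueError("frequency must be positive")
    return 69 + 12 * math.log2(freq_hz / 440.0)

def nearest_note(freq_hz: float) -> int:
    """Round to the closest equal-tempered MIDI note."""
    return round(freq_to_midi(freq_hz))

def note_name(note: int) -> str:
    """Name a MIDI note, using the convention that note 60 is C4."""
    octave = note // 12 - 1
    return f"{NOTE_NAMES[note % 12]}{octave}"

# The open low E string on a standard-tuned guitar is about 82.41 Hz.
print(nearest_note(82.41), note_name(nearest_note(82.41)))
```

The fractional value from `freq_to_midi` is kept separate from the rounding step, so a visual display could also respond to how far a played note is bent away from the nearest semitone.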

I am investigating the Max object fiddle~ and the Processing library maxLink for this. I will send the MIDI data from Max to my Processing sketch via maxLink.

I am still nailing down the details of how I would like to proceed. It is my first time using Max, playing guitar in public, and doing live image processing, so there should be much to learn and post about along the way!

Monday, November 27, 2006

Friday, November 10, 2006

Living Utensils

For my Intro to Physical Computing final project, I'm making a set of utensils that respond to the user. I have a quick summary of it here:

Project Statement

and a QuickTime movie here.

More updates to come soon.


Lab: Week 9, MIDI Out

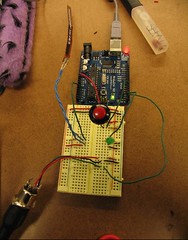

This lab involves building a circuit that sends MIDI out through a MIDI jack. The push button toggles the note on or off, and the flex sensor selects which note is sent. The MIDI cable runs to a MIDI interface box connected to my laptop, and I play whatever notes I generate through GarageBand.
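Here is a minimal sketch of the logic this circuit implements, written in Python rather than microcontroller code. It assumes a 10-bit analog reading from the flex sensor (0 to 1023) and an arbitrary two-octave note range; the range, channel, velocity, and the `NoteToggle` class are hypothetical. The message bytes follow the MIDI spec: a note-on is status `0x90` plus the channel, a note-off is `0x80` plus the channel, each followed by a note number and a velocity.

```python
NOTE_ON = 0x90
NOTE_OFF = 0x80

def note_on(channel: int, note: int, velocity: int) -> bytes:
    # Data bytes are masked to 7 bits so they can never look like a status byte.
    return bytes([NOTE_ON | (channel & 0x0F), note & 0x7F, velocity & 0x7F])

def note_off(channel: int, note: int, velocity: int = 0) -> bytes:
    return bytes([NOTE_OFF | (channel & 0x0F), note & 0x7F, velocity & 0x7F])

def flex_to_note(reading: int, low_note: int = 48, high_note: int = 72,
                 adc_max: int = 1023) -> int:
    """Map a (hypothetical) 10-bit flex sensor reading linearly onto a note range."""
    reading = max(0, min(adc_max, reading))
    return low_note + (reading * (high_note - low_note)) // adc_max

class NoteToggle:
    """Each button press toggles between sending note-on and note-off."""

    def __init__(self, channel: int = 0, velocity: int = 100):
        self.channel = channel
        self.velocity = velocity
        self.playing = None  # note currently sounding, or None

    def press(self, flex_reading: int) -> bytes:
        """Handle one button press and return the MIDI bytes to send."""
        if self.playing is None:
            # Pick the note from the flex sensor at the moment the note starts.
            note = flex_to_note(flex_reading)
            self.playing = note
            return note_on(self.channel, note, self.velocity)
        # Turn off the note that is actually sounding, even if the sensor moved.
        msg = note_off(self.channel, self.playing)
        self.playing = None
        return msg
```

Remembering which note is sounding matters. If the note-off were computed from the current flex reading instead, bending the sensor between presses would leave the original note stuck on.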

This was a pretty straightforward lab, although I did encounter one issue. All of the notes coming out of GarageBand were very quiet. I had all of my volume levels up, yet I could barely hear the notes. This was probably due to the velocity value I was sending with each note.
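In a note-on message, the velocity is the third byte (0 to 127), and most software instruments scale a note's loudness with it. That would explain quiet notes even with every volume control turned up. A note-on with velocity 0 is also treated as a note-off under the MIDI spec. The sketch below assumes you want to catch low or zero velocities before sending; the helper names are hypothetical.

```python
NOTE_ON = 0x90
NOTE_OFF = 0x80

def note_on(channel: int, note: int, velocity: int = 127) -> bytes:
    """Build a note-on message, rejecting out-of-range values instead of masking them."""
    if not 0 <= channel <= 15:
        raise ValueError("channel must be 0-15")
    if not 0 <= note <= 127:
        raise ValueError("note must be 0-127")
    if not 1 <= velocity <= 127:
        # Velocity 0 would be read as a note-off, so require at least 1.
        raise ValueError("velocity must be 1-127 for an audible note-on")
    return bytes([NOTE_ON | channel, note, velocity])

def acts_as_note_off(msg: bytes) -> bool:
    """True for a real note-off, or for a note-on whose velocity byte is 0."""
    status = msg[0] & 0xF0
    return status == NOTE_OFF or (status == NOTE_ON and msg[2] == 0)

print(note_on(0, 60).hex())                   # full-velocity middle C on channel 1
print(acts_as_note_off(bytes([0x90, 60, 0])))  # note-on with velocity 0
```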

